VIRTUOS EXPERT TALKS #11

The eleventh in a series of regular articles highlighting learnings and best practices from Virtuos’ global development, art, and VFX teams.

Introduction

As PC and console hardware continues to improve, game engines can render ever higher poly-count meshes and higher-resolution textures, allowing developers to create increasingly realistic environments in AAA titles. However, the time and complexity involved in creating these ultra-high-quality assets have grown accordingly, especially when the goal is to make them as lifelike as possible.

In hindsight, the pivot to photogrammetry seems a natural progression, driven in part by growing demand for ultra-realistic in-game assets and the continuous evolution of game engines. Today, anyone can take a series of photos, process them, and produce an extremely realistic model with matching textures, ready for use as a game asset, in anywhere from a few hours to a few days.

Photogrammetry has been around for many years, but it was only after the technique was presented as a success story from the making of Star Wars Battlefront at the Game Developers Conference (GDC) four years ago that more and more developers began adding it to their arsenal. Virtuos has likewise made progress with photogrammetry across its studios and is currently using the technology to produce highly detailed environments in several end-to-end game production projects.

The Inception of Photogrammetry at Sparx*

A significant part of Virtuos’ photogrammetry activity is performed at Sparx* – a Virtuos studio located in Ho Chi Minh City, Vietnam. Known for its visual effects work in major blockbuster films such as Star Wars, Sparx* possesses the talent and the necessary equipment to jumpstart our exploration of photogrammetry.

Although Sparx* had been using photogrammetry in its film work for quite some time, it mainly served as an accurate modeling guide for real-world objects: the actual model was built by hand afterward, with its textures re-projected from photos of its real-life counterpart.

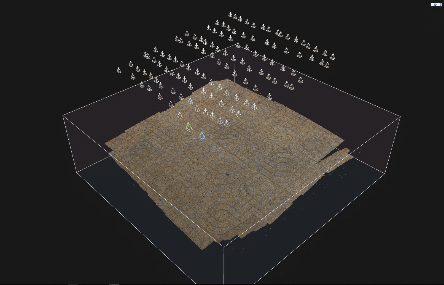

It was not until late 2018, when the studio processed and de-lit scan data and then rebuilt entire locations in Unreal for the first time for Disney Plus’s “The Mandalorian”, that photogrammetry was seriously considered as a tool for creating realistic 3D objects efficiently. Since then, Sparx* has devoted more time and resources to researching and testing the technology, building up its process and acquiring the necessary hardware along the way.

Today, Sparx* is already using photogrammetry extensively on a number of yet-to-be-released game projects. Leading the charge are Kristian Pedlow, Senior Art Director, and Minh Son, Senior Technical Artist, both members of the Sparx* ‘Photogrammetry Strike Team’, the group responsible for all things photogrammetry within the studio.

Pictured: Kristian (left) and Minh Son (right).

Capturing Reality: How Sparx* Acquires Scans

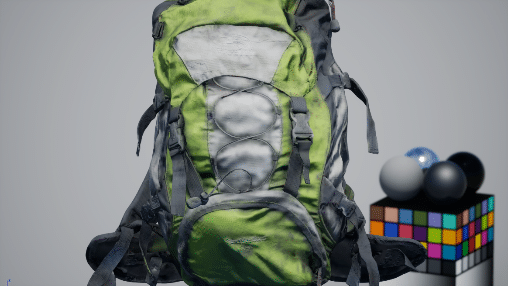

Before heading out into the world to conduct photogrammetry scans, the Sparx* Photogrammetry Strike Team first assembles a collection of equipment necessary for the acquisition process:

- A full-frame camera body (the team currently favors a Sony A7II)

- A decent full-frame prime lens and zoom lens (a 28-70mm zoom is the current choice)

- An X-Rite ColorChecker

- A ring flash, to ensure the subject is properly lit

- Light diffusers (to avoid having to de-light hotspots in the photographs)

- A foliage cutter

- Flags for marking objects while scouting (and/or pins in Google Maps)

- Slates and a ruler, for labeling objects and providing a scale cue

- A handheld flashlight (for foliage)

- Two reference orbs, one chrome and one grey

- Black-colored cloth for use when keying out objects (black works better than green or blue)

Minh Son: “A typical expedition will last from a few days to a week, depending on how large the landscape is and how many assets we want to acquire in the chosen biome.

We usually begin by spending up to a full day scouting the chosen area and listing all the assets we need. We then spend the next few days systematically capturing all of them, making sure we don’t miss anything.

Before capturing each asset, whether indoors or outdoors, we first photograph it with a ColorChecker for white balance and color correction later on. We then take another photo with a ruler to record the asset’s scale.”
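For readers curious about what the ColorChecker and ruler photos feed into, the sketch below shows the basic arithmetic in Python with NumPy. It is a minimal illustration, not the team’s Photoshop workflow, and it assumes linear (RAW-derived) RGB values in the 0 to 1 range, a region already cropped from a neutral grey patch, and a ruler length already measured on the reconstructed mesh. The function names are hypothetical.

```python
import numpy as np

def white_balance_gains(grey_patch_rgb):
    """Per-channel gains that make a neutral grey patch read as neutral.

    grey_patch_rgb: (H, W, 3) array of linear RGB values sampled from a
    grey patch of the color chart (an assumption of this sketch).
    """
    mean_rgb = grey_patch_rgb.reshape(-1, 3).mean(axis=0)
    # Normalize to the green channel, a common convention for white balance.
    return mean_rgb[1] / mean_rgb

def apply_gains(image_rgb, gains):
    # Scale each channel, then clip to keep values in the valid [0, 1] range.
    return np.clip(image_rgb * gains, 0.0, 1.0)

def scale_factor(measured_length_units, real_length_mm):
    """Uniform scale that maps reconstruction units to millimeters."""
    return real_length_mm / measured_length_units

# Example: a grey patch that reads warm (too much red, too little blue).
patch = np.full((4, 4, 3), [0.5, 0.4, 0.3])
gains = white_balance_gains(patch)    # about [0.8, 1.0, 1.333]
balanced = apply_gains(patch, gains)  # every channel becomes 0.4
```

If the ruler’s 100 mm span measures 0.25 units on the reconstructed mesh, `scale_factor(0.25, 100.0)` gives 400.0, the factor by which the mesh would be scaled to real-world millimeters.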

Once the preliminary photos are taken, the capture can begin properly. There are three main categories of scans to capture in these expeditions: Assets, Surfaces, and Foliage/Atlas.

Assets

In the context of photogrammetry, Assets are real-world objects that are scanned and processed individually.

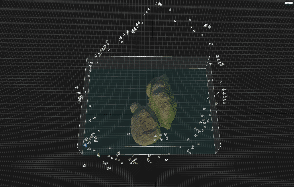

First, the Strike Team captures an overall view of the asset, to make sure that no part is missing when they proceed with image alignment and to minimize the need for manual alignment. Next, the camera is moved up close to the asset to capture every possible detail; a third round may be required if the asset has sharp corners that need extra attention.

Minh Son: “When hard shadows appear, as in the example above, we try to shoot with a polarizing filter, which may help reduce the intensity of the directional light somewhat, although a de-lighting pass is still necessary later in the process.

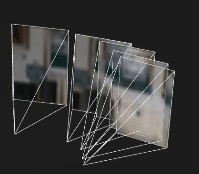

To capture properly, we always move the camera between shots. Panning or rotating the camera on a stationary tripod without moving it can cause duplicate geometry with a slight offset. I would also recommend avoiding camera groupings like the ones below:”

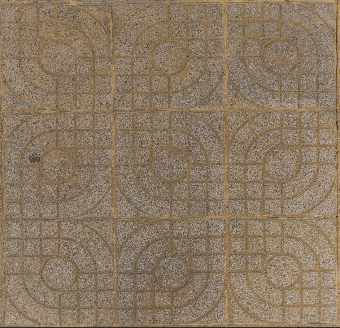
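One way to see why moving the camera matters is parallax: estimating depth needs viewpoints separated by some distance (the baseline), and rotating in place on a tripod keeps that baseline close to zero. The hypothetical Python sketch below plans evenly spaced camera positions on a ring around an asset and measures the distance between neighboring shots; the ring radius, height, and shot count are illustrative assumptions, not Sparx* settings.

```python
import math

def orbit_positions(radius_m, height_m, shots):
    """Evenly spaced camera centers on a horizontal ring around an asset
    placed at the origin. Every shot is taken from a different position."""
    return [
        (radius_m * math.cos(2.0 * math.pi * i / shots),
         radius_m * math.sin(2.0 * math.pi * i / shots),
         height_m)
        for i in range(shots)
    ]

def baselines(positions):
    """Distance between each pair of consecutive camera centers (wrapping around)."""
    return [math.dist(positions[i], positions[(i + 1) % len(positions)])
            for i in range(len(positions))]

# A ring of 36 shots at 2 m: neighboring shots are about 0.35 m apart.
ring = orbit_positions(radius_m=2.0, height_m=1.5, shots=36)
print(round(min(baselines(ring)), 3))

# Rotating in place on a fixed tripod, approximated here as every camera
# center sitting at the same point, so every baseline is zero.
in_place = [(2.0, 0.0, 1.5)] * 36
print(max(baselines(in_place)))
```

For a ring, each baseline equals the chord length 2 × radius × sin(π / shots), so adding shots shrinks the spacing between them while every pair still has some parallax.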

Surfaces

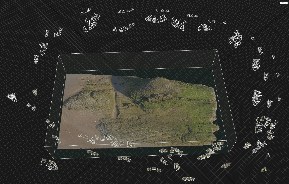

Environment surfaces are also acquired via photogrammetry when there’s a need to capture the surrounding landscape.

For surface acquisition, the team mounts the camera on a tripod or monopod, ensuring that it is always perpendicular to the surface, as pictured.
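Keeping the camera perpendicular to the surface makes each frame’s coverage easy to estimate with simple pinhole-camera geometry: the width of surface covered is the camera-to-surface distance multiplied by the sensor width and divided by the focal length. The Python sketch below turns that into a spacing between shots. It assumes, for illustration, a 36 mm wide full-frame sensor, a 50mm lens, 6000-pixel-wide images, and 70% overlap between frames; the overlap figure is a common rule of thumb rather than a value from the team, and the pinhole model ignores lens distortion and focusing effects.

```python
def footprint_mm(distance_mm, focal_mm, sensor_mm):
    """Surface width covered by one frame (pinhole model, camera perpendicular)."""
    return distance_mm * sensor_mm / focal_mm

def surface_capture_plan(distance_mm, focal_mm=50.0, sensor_w_mm=36.0,
                         image_w_px=6000, overlap=0.7):
    """Coverage, resolution, and spacing along the long side of the frame.

    All default values are illustrative assumptions.
    """
    width = footprint_mm(distance_mm, focal_mm, sensor_w_mm)
    return {
        "footprint_mm": width,               # surface covered by one frame
        "mm_per_pixel": width / image_w_px,  # detail resolved per pixel
        "step_mm": width * (1.0 - overlap),  # move the camera this far between shots
    }

# Shooting from 1 m with a 50mm lens.
plan = surface_capture_plan(distance_mm=1000.0)
print(plan)
```

Under these assumptions, one frame covers roughly 720 mm of surface at about 0.12 mm per pixel, so the camera would move about 216 mm between shots. Moving closer or using a longer lens increases detail per pixel but requires more shots to cover the same area.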

Minh Son: “We are also testing acquisition with a prime lens, since its output quality is better than that of a zoom lens. In this example, we’re using a Sigma 50mm f/1.4 EX DG HSM to capture the surface.

Aside from that, we also capture an overview image with a wide-angle zoom lens. This is not only for scaling purposes; if needed, it also lets us generate a PBR material from a single image using Substance Alchemist or Unity ArtEngine. The example below shows a processed image captured with a Sigma 12-24mm f/4.5-5.6 EX DG HSM.”

Foliage/Atlas

Foliage atlas captures are frequently done to provide accurate scans of plants without too much storage overhead. Previously, the team would use a cutter to trim a few branches and twigs, store them in ziplock bags, and bring the samples back to the studio for acquisition. They soon realized, however, that it was much more efficient to perform the acquisition onsite whenever possible, by laying the atlas on a blue screen and illuminating it with a handheld light from eight angles. While this has worked well so far, the team is considering replacing the blue screen with a simple grey tarp, since objects on a blue screen can pick up a blue cast that is difficult to remove.
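Keying a foliage sample off its backdrop amounts to building an alpha mask from how far each pixel’s color is from the backdrop color. The minimal NumPy sketch below shows the idea with a soft threshold; it is not the team’s tool, and the `inner` and `outer` tolerances are hypothetical values that would need tuning per shoot. It also hints at why color spill is a problem: edge pixels tinted by a blue screen sit closer to the key color and get partly keyed out, whereas a neutral backdrop does not tint the subject.

```python
import numpy as np

def key_alpha(image_rgb, key_rgb, inner=0.1, outer=0.3):
    """Soft alpha matte from each pixel's RGB distance to the backdrop color.

    distance <= inner -> alpha 0 (backdrop)
    distance >= outer -> alpha 1 (subject)
    linear ramp in between. Expects float RGB in [0, 1].
    """
    dist = np.linalg.norm(image_rgb - np.asarray(key_rgb, dtype=float), axis=-1)
    return np.clip((dist - inner) / (outer - inner), 0.0, 1.0)

# One row of three pixels: pure backdrop blue, an edge pixel contaminated
# by blue spill, and a green leaf.
row = np.array([[[0.0, 0.0, 1.0], [0.2, 0.0, 1.0], [0.1, 0.6, 0.1]]])
alpha = key_alpha(row, key_rgb=(0.0, 0.0, 1.0))
```

Here the backdrop pixel gets an alpha of 0, the spill-contaminated edge about 0.5, and the leaf 1. With the black cloth from the equipment list, `key_rgb` would simply be `(0.0, 0.0, 0.0)`, which turns this into a brightness-based key.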

Minh Son: “Lighting conditions are our main obstacle during acquisition. Overcast days are ideal for photogrammetry, but because of the weather in Vietnam, we mostly find ourselves shooting on sunny days. This means dealing with hard shadows and overexposure, especially in forests where trees cover some areas; sometimes we end up capturing assets in shady areas with brightly lit spots in between.

To deal with this, we try to illuminate the area with our ring flash, and we capture everything in RAW format so that we can handle shadows and highlights in Photoshop / Lightroom during processing.”

Bringing It Together: Processing the Scans

Once the team returns from a shoot, the data needs to be cataloged and processed in the studio, turning the acquisitions into 3D objects ready for use in game engines such as Unity and Unreal.

Kristian: “During processing, we may find that some acquisitions have details that were out of focus or in shadow, making them unusable. To counter this, we do as much processing as possible on site: we capture an asset, swap out the camera’s SD card, and run a quick image alignment on a laptop right there. This way, we can make sure the capture is high quality and avoid having to return to the same location the following day.”
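A quick on-site check for out-of-focus frames can complement the laptop alignment described above. A widely used heuristic is the variance of the Laplacian: sharp images contain strong local intensity changes and score high, while blurred ones score low. The NumPy sketch below is an illustrative addition, not part of the Sparx* pipeline; it expects grayscale arrays, and the threshold is a hypothetical value that depends on the camera, lens, and subject.

```python
import numpy as np

def laplacian_variance(gray):
    """Variance of a 4-neighbor Laplacian over the image interior.

    Low values suggest a blurry (or featureless) image.
    """
    g = gray.astype(np.float64)
    lap = (g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
           - 4.0 * g[1:-1, 1:-1])
    return float(lap.var())

def flag_blurry(images, threshold):
    """Indices of images whose sharpness score falls below the threshold."""
    return [i for i, img in enumerate(images) if laplacian_variance(img) < threshold]

# Synthetic extremes: a flat image with no detail and a pixel checkerboard.
flat = np.zeros((64, 64))
checker = (np.indices((64, 64)).sum(axis=0) % 2).astype(float)
print(flag_blurry([flat, checker], threshold=1.0))  # -> [0]
```

In practice, the score would be compared across the frames of a single asset, since absolute values shift with texture content and exposure.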

Minh Son: “To process the images, we currently color correct them in Photoshop, then run them through RealityCapture (RC) to rebuild the mesh. Sometimes we can then simply cut out the mesh, reduce its polycount in RC, and export the low-poly and high-poly versions for map baking. In other cases, we import it into ZBrush for further touch-ups, such as filling holes in the object or slicing it into modular pieces.

We usually bake maps in Substance Designer/Painter and xNormal, since these programs, especially Substance Painter and xNormal, can handle meshes of more than 200 million triangles.

After baking, we run the base color texture through a de-lighting process, which removes the shadows and highlights baked into it. Depending on the asset, we use either the Unity De-Lighting tool or Agisoft De-Lighter.

At this point, the assets are stored in an in-house asset library for future use.”
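Conceptually, de-lighting tries to separate a surface’s own color from the lighting captured in the photos. The deliberately crude NumPy sketch below only illustrates that idea: it treats the blurred luminance of the base color texture as an estimate of low-frequency shading and divides it out. This is not how the Unity De-Lighting tool or Agisoft De-Lighter work. Dedicated tools draw on more information than a single texture, and this shortcut will also flatten genuine large-scale color variation and cannot remove hard shadows cleanly. The blur radius and the Rec. 709 luminance weights are assumptions chosen for illustration.

```python
import numpy as np

def box_blur(channel, radius):
    """Separable box blur with edge padding; output keeps the input size."""
    k = np.ones(2 * radius + 1) / (2 * radius + 1)
    padded = np.pad(channel, radius, mode="edge")
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)

def crude_delight(base_color, radius=16, eps=1e-4):
    """Divide out low-frequency shading estimated from luminance.

    base_color: (H, W, 3) linear RGB in [0, 1].
    """
    lum = base_color @ np.array([0.2126, 0.7152, 0.0722])  # Rec. 709 weights
    shading = box_blur(lum, radius)
    flat = base_color / (shading[..., None] + eps)
    # Restore the original average brightness so the result is not washed out.
    flat *= lum.mean()
    return np.clip(flat, 0.0, 1.0)

# Example: flat grey albedo under a left-to-right lighting gradient.
shade = np.linspace(0.5, 1.0, 64)[None, :, None]
texture = np.broadcast_to(0.5 * shade, (32, 64, 3)).copy()
result = crude_delight(texture)
```

On this synthetic texture, the gradient largely disappears except for small residuals near the left and right edges, where the edge padding biases the blur.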

The Future of Photogrammetry at Sparx* and Virtuos

The Photogrammetry Strike Team is still in the early stages of its establishment. It is mainly composed of senior environment artists who already have experience with projects that require photogrammetry and have the know-how to go out and capture the scans clients ask for.

In time, the team plans to expand its roster, train more people in the process, and appoint team leads to take charge of the entire process. The end state of the Strike Team would include artists dedicated to photogrammetry as a discipline.

Meanwhile, Sparx* continues to improve its range of photogrammetry services. While augmenting its own asset creation capabilities was the main catalyst for the studio’s adoption of the technique, Sparx* has begun offering its clients the ability to pick specific biomes and assets to replicate via photogrammetry. For now, only environments native to Vietnam are available due to restrictions imposed by the COVID-19 pandemic, but the team is fully capable of traveling to nearby countries to access a wider range of source material once conditions allow.

This, combined with the studio’s continuous efforts to upgrade both its manpower and the quality of its equipment, points to an aggressive push to make photogrammetry part of Virtuos’ array of End-to-End game development services.

- For more news about Virtuos, please visit: www.virtuosgames.com/en/news

- For business enquiries, please contact: [email protected]

About Virtuos

Founded in 2004, Virtuos Holdings Pte. Ltd. is a leading videogame content production company with operations in Singapore, China, Vietnam, Canada, France, Japan, Ireland, and the United States. With 1,600 full-time professionals, Virtuos specializes in game development and 3D art production for AAA consoles, PC, and mobile titles, enabling its customers to generate additional revenue and achieve operational efficiency. For over a decade, Virtuos has successfully delivered high-quality content for more than 1,300 projects and its customers include 18 of the top 20 digital entertainment companies worldwide. For more information, please visit: www.virtuosgames.com

About Sparx*

Sparx* is one of the top studios in Asia, providing large-scale production services, creating a superb range of solutions for the highest quality 3D Art, Visual effects (VFX) & Animation. Acquired by Virtuos – one of the world’s largest digital content providers – in 2011, Sparx* has 450 professional artists working on all of the latest tools, engines & platforms. For more information, please visit www.sparx.com